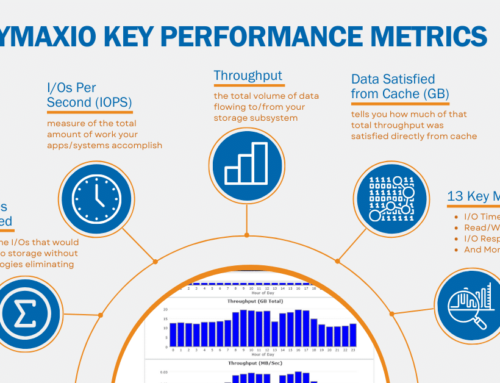

The latest versions of V-locity® (for virtual servers) and Diskeeper® (for physical servers and PCs) both include built-in dashboards that show the exact benefit of the product to any one system or group of systems: how much read/write traffic is offloaded from storage, what percentage of the total that represents, and how much “I/O Time” that saves.

In its simplest form, the “I/O Time Saved” computation is essentially:

Storage I/O Time Saved = Total I/Os Eliminated * Average I/O Response Time

In essence, if you take Total I/Os Eliminated from the dashboard Benefits screen and multiply it by the average latency from the I/O Performance dashboard screen, you will generally end up in the ballpark of the “I/O Time Saved.”
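As a quick illustration of that multiplication, here is a minimal Python sketch. The function name and the sample numbers are hypothetical and are not taken from any dashboard; it only reproduces the ballpark formula, not the product's internal per-period accounting.

```python
def io_time_saved_seconds(ios_eliminated: int, avg_response_time_ms: float) -> float:
    """Ballpark estimate: I/Os eliminated times average I/O response time."""
    # Convert milliseconds to seconds so the result is easier to compare with clock time.
    return ios_eliminated * avg_response_time_ms / 1000.0


# Hypothetical readings from the two dashboard screens.
estimate = io_time_saved_seconds(ios_eliminated=2_000_000, avg_response_time_ms=5.0)
print(f"Estimated storage I/O time saved: {estimate:.0f} s")
```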

I/O counts and I/O times are accumulated on a per-I/O basis. Every I/O that goes to storage is timed using Windows High Performance Counters for accuracy. That timing runs from when the I/O is sent down the stack until it comes back up. In essence, we time the I/O response time (IORT), that is, the latency the application sees, not the latency of the storage device itself. We also track reads and writes separately, as they impact the storage “I/O Time Saved” differently.
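To make the idea of per-I/O timing concrete, here is a small application-level sketch in Python. It is only an analogy: the `IoStats` class and its method names are hypothetical, the product itself measures inside the Windows I/O stack rather than in application code, and `time.sleep` merely stands in for a real I/O. It does show the two points made above: each operation is timed from issue to completion with a high-resolution clock, and reads and writes are accumulated separately.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class IoStats:
    """Accumulates a count and total elapsed time per I/O kind ("read" or "write")."""

    def __init__(self) -> None:
        self.count = defaultdict(int)
        self.total_ns = defaultdict(int)

    @contextmanager
    def timed(self, kind: str):
        # perf_counter_ns is Python's high-resolution monotonic clock.
        start = time.perf_counter_ns()
        try:
            yield
        finally:
            self.total_ns[kind] += time.perf_counter_ns() - start
            self.count[kind] += 1

    def avg_ms(self, kind: str) -> float:
        n = self.count[kind]
        return self.total_ns[kind] / n / 1e6 if n else 0.0


stats = IoStats()
with stats.timed("read"):
    time.sleep(0.001)  # stand-in for a read that goes to storage
print(stats.count["read"], "read timed, average", round(stats.avg_ms("read"), 3), "ms")
```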

The data is accumulated and calculated per time period rather than across the entire reporting period. In the long term, those periods end up being hourly. Very active I/O periods will have longer IORTs, so the amount of storage I/O time saved per I/O eliminated will likely be greater than during relatively light periods.
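The effect of per-period accumulation can be seen in a small Python sketch. The `Period` record, the function names, and all numbers are hypothetical, chosen only to contrast a busy hour with a quiet one; this is not the product's actual code. It compares summing the savings hour by hour against multiplying the grand total of eliminated I/Os by a single overall average latency.

```python
from dataclasses import dataclass


@dataclass
class Period:
    ios_eliminated: int   # I/Os served without going to storage in this hour
    ios_to_storage: int   # I/Os that did go to storage in this hour
    avg_iort_ms: float    # average response time of the I/Os that went to storage


def saved_per_period_ms(periods: list[Period]) -> float:
    # Each hour's eliminated I/Os are weighted by that hour's own latency.
    return sum(p.ios_eliminated * p.avg_iort_ms for p in periods)


def saved_overall_average_ms(periods: list[Period]) -> float:
    # One latency figure for the whole range, weighted by I/Os that reached storage.
    to_storage = sum(p.ios_to_storage for p in periods)
    overall_avg = sum(p.ios_to_storage * p.avg_iort_ms for p in periods) / to_storage
    return sum(p.ios_eliminated for p in periods) * overall_avg


periods = [
    Period(ios_eliminated=90_000, ios_to_storage=60_000, avg_iort_ms=10.0),  # busy hour
    Period(ios_eliminated=10_000, ios_to_storage=40_000, avg_iort_ms=2.0),   # quiet hour
]
print(saved_per_period_ms(periods), saved_overall_average_ms(periods))
```

With these made-up numbers, the per-period total is larger than the overall-average estimate because most eliminations happen in the hour with the longest response times. This is one reason a single multiplication of two dashboard figures lands only in the ballpark of the reported value.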

If there is a high queue depth, the IORT we time will be larger than the per-I/O storage IORT. We look at the effective IORT the application would see rather than the time the underlying storage takes to process any single I/O. After all, users only care about how long the application took to process an I/O they requested, not how long an HDD or SSD took for any single I/O once it got around to processing it.

Let’s talk for a moment about storage “I/O Time Saved” versus clock time because they are not the same and our technologies can, in some cases, save far more storage I/O time than clock time.

If all storage I/O were strictly sequential (one I/O at a time) for the entire instance of the operating system, then the maximum amount of storage “I/O Time Saved” would be the amount of time since installation. You would expect it to be considerably less, as we are unlikely to eliminate ALL I/Os and there is usually some idle time. Of course, applications do not do pure sequential I/O. Modern applications are almost always multi-threaded, and most computer systems run multiple applications, or multiple instances of them, at the same time. Other operations are also happening on the system outside of the primary application. Think of Outlook running in the background while you do some other work on your system: Outlook is constantly receiving updated data. Windows is also processing lots of I/Os in the background just to be able to continue operating. These I/Os happen in parallel with any I/Os that users may be doing with an application.

In general, lots of I/Os are being processed at the same time. You would not want to work on a computer system where only a single I/O was being processed at any one point in time, as it would be VERY slow. If the average queue depth would have been 5 without our product but is 2 with it, then for every 2 I/Os that go through to storage, we have eliminated 3 I/Os. The end result would be a storage “I/O Time Saved” of somewhere between 1.5x and 3x clock time, depending on how the underlying storage processed the I/Os.
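The queue-depth example can be checked with a few lines of Python. The helper names are hypothetical, and the sketch only counts I/Os. How those counts translate into time saved depends on how the storage handles concurrent I/Os, which is not modeled here.

```python
from fractions import Fraction


def eliminated_per_io_to_storage(depth_without: int, depth_with: int) -> Fraction:
    """I/Os eliminated for each I/O that still goes through to storage."""
    if not 0 < depth_with <= depth_without:
        raise ValueError("expected 0 < depth_with <= depth_without")
    return Fraction(depth_without - depth_with, depth_with)


def fraction_eliminated(depth_without: int, depth_with: int) -> Fraction:
    """Share of all outstanding I/Os that never reach storage."""
    if not 0 < depth_with <= depth_without:
        raise ValueError("expected 0 < depth_with <= depth_without")
    return Fraction(depth_without - depth_with, depth_without)


# Average queue depth of 5 without the product and 2 with it.
print(eliminated_per_io_to_storage(5, 2))  # 3 eliminated per 2 passed through
print(fraction_eliminated(5, 2))           # 3 out of every 5 outstanding I/Os
```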

Another factor that contributes to the possibility of storage “I/O Time Saved” exceeding clock time is the reduction of split I/Os. Let’s say that without our product, every I/O ends up being split into 3 I/Os because Windows writes files in an excessively small, fragmented manner. After installing our product, which displaces tiny writes with large, contiguous writes, each of those I/Os that had to be split into 3 is now completed as a single I/O. If that were the normal case, the storage “I/O Time Saved” for each I/O would be roughly 2x the actual storage I/O time, due to the prevention of fragmentation.
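The split-I/O arithmetic can be sketched the same way. The function name and the sample numbers are hypothetical, and the sketch assumes every fragment takes the same average response time, which real storage will not guarantee.

```python
def split_io_savings_ms(logical_ios: int, splits_before: int,
                        splits_after: int, avg_iort_ms: float) -> tuple[float, float]:
    """Return (time saved, time still spent on storage), both in milliseconds."""
    # Every logical I/O used to cost `splits_before` storage I/Os and now costs `splits_after`.
    eliminated = logical_ios * (splits_before - splits_after)
    remaining = logical_ios * splits_after
    return eliminated * avg_iort_ms, remaining * avg_iort_ms


# 1,000 logical I/Os, each formerly split into 3 and now completed as 1, at 4 ms each.
saved_ms, spent_ms = split_io_savings_ms(1_000, splits_before=3, splits_after=1, avg_iort_ms=4.0)
print(saved_ms, spent_ms, saved_ms / spent_ms)
```

Under that equal-cost assumption, two storage I/Os are avoided for each one that is still issued, which is where the roughly 2x figure comes from.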
