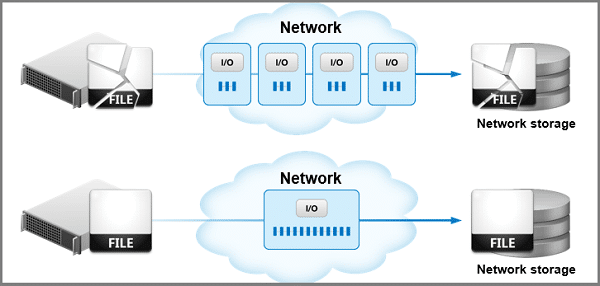

Random I/O versus Sequential I/O

To demonstrate the performance difference between I/O patterns, picture a veterinarian's office where all records are still kept on paper in file cabinets. The records for a single animal (billing, payments, medication, visits, procedures, and so on) are split across different folders, and each folder is filed in a different cabinet by category, such as Billing or Payments.

To gather everything on that one animal, you might have to pull 10 different folders from 10 different cabinets. Wouldn't it be easier if all of that data were in a single folder that you could retrieve in one step? That, in essence, is the difference between Random I/O and Sequential I/O.

Random I/O Is Much Slower

Accessing data randomly is much slower and less efficient than accessing it sequentially. Put simply, reading or writing a given amount of data with one sequential I/O operation is faster than doing it with many smaller random I/Os (say, 25).

- First, the operating system must process every one of those extra I/Os instead of a single one, which adds substantial overhead.

- Second, the storage device must also service each of those I/Os individually.
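
To make the first point concrete, here is a minimal Python sketch that fetches the same 100 KiB from a temporary file in two ways: once with a single read request, and once as 25 separate 4 KiB requests at shuffled offsets. The chunk size, the chunk count, and the function names `read_sequential` and `read_random` are illustrative assumptions. The sketch counts requests rather than timing them, because on a file this small the operating system's cache would hide most of the device cost. It only shows that the random pattern hands the OS 25 times as many requests for the same data.

```python
import os
import random
import tempfile

CHUNK = 4096            # assumed size of each small request (4 KiB)
N_CHUNKS = 25           # the "say, 25" smaller I/Os from the text
TOTAL = CHUNK * N_CHUNKS


def read_sequential(path):
    """Fetch the whole range with a single read request."""
    with open(path, "rb", buffering=0) as f:  # unbuffered: each read() goes to the OS
        data = f.read(TOTAL)
    return data, 1


def read_random(path, seed=0):
    """Fetch the same range as N_CHUNKS separate requests in shuffled order."""
    order = list(range(N_CHUNKS))
    random.Random(seed).shuffle(order)
    buf = bytearray(TOTAL)
    requests = 0
    with open(path, "rb", buffering=0) as f:
        for i in order:
            f.seek(i * CHUNK)                 # jump to a non-adjacent offset
            buf[i * CHUNK:(i + 1) * CHUNK] = f.read(CHUNK)
            requests += 1
    return bytes(buf), requests


if __name__ == "__main__":
    payload = os.urandom(TOTAL)
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(payload)
        path = tmp.name
    try:
        seq, seq_reqs = read_sequential(path)
        rnd, rnd_reqs = read_random(path)
        assert seq == rnd == payload          # same data either way
        print(f"sequential: {seq_reqs} request(s), random: {rnd_reqs} requests")
    finally:
        os.remove(path)
```

Both functions return identical bytes; the only difference is how many separate requests the operating system and the device have to handle.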

Random I/O on SSDs and HDDs

With Hard Disk Drives (HDDs), the penalty is worse: for each random I/O the disk head must move to a new position and wait for the platter to rotate the data under it, and that mechanical movement is very time-consuming.

With Solid State Drives (SSDs), there is no disk head movement, but the device still pays the cost of processing many I/Os instead of a single one. In fact, storage manufacturers typically publish two performance figures for their devices: Random I/O and Sequential I/O.

Comparing those published figures, you will notice that Sequential I/O always outperforms Random I/O. This is true for both HDDs and SSDs.
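
The relative penalty on each device type can be illustrated with a simple cost model: every request pays a fixed processing cost, an HDD additionally pays a positioning cost (seek plus rotation) each time it has to reach a new location, and both devices pay the same transfer time for the bytes themselves. All latency and throughput numbers below are hypothetical round figures chosen for illustration, not measurements or vendor specifications, and the `Device` and `access_time_us` names are made up for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    name: str
    per_request_us: float  # assumed fixed cost for the OS and device to handle one request
    position_us: float     # assumed cost to reach a new location (head seek + rotation)
    bytes_per_us: float    # assumed transfer rate (150 bytes/us == 150 MB/s)


def access_time_us(dev: Device, total_bytes: int, n_requests: int) -> float:
    """Time to move total_bytes split into n_requests, each at its own location."""
    transfer_us = total_bytes / dev.bytes_per_us           # identical for both patterns
    overhead_us = n_requests * (dev.per_request_us + dev.position_us)
    return overhead_us + transfer_us


# Illustrative numbers only, not measurements of any real drive.
HDD = Device("HDD", per_request_us=20, position_us=8000, bytes_per_us=150)
SSD = Device("SSD", per_request_us=80, position_us=0, bytes_per_us=500)

TOTAL = 25 * 4096  # the same 100 KiB of data in both cases

if __name__ == "__main__":
    for dev in (HDD, SSD):
        seq = access_time_us(dev, TOTAL, 1)    # one sequential request
        rnd = access_time_us(dev, TOTAL, 25)   # 25 random requests
        print(f"{dev.name}: sequential {seq:,.0f} us, random {rnd:,.0f} us, "
              f"random/sequential {rnd / seq:.1f}x")
```

With these assumed numbers the random pattern is slower on both devices, but the gap is far wider on the HDD because each of the 25 requests also pays the positioning cost. Real drives add effects this model ignores, such as caching, command queuing, and read-ahead.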

Increasing I/O Performance

Ensuring that I/O is sequential gives you optimal I/O performance, both at the operating system level (fewer I/Os to process) and at the storage level.

Unfortunately, the Windows file system tends to cause Random I/O both when data is written out and when that same data is later read back in. The reason is that when files are created or extended, the file system does not know how large those creations or extensions will be. It therefore cannot tell which free allocation would let the data be written to one logical location (one I/O). Instead, it may simply take the next available allocation, which may be too small, then find another allocation (another I/O), and keep doing so until all the data is written out.
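
The allocation problem described above can be sketched with a toy free-space map. This is a deliberately simplified model, not how the Windows file system actually allocates clusters: `allocate_unaware` fills a request from whichever free runs come next, as a file system must when it does not know the final size, while `allocate_size_aware` looks for one run large enough when the size is known in advance. Both function names and the free-space layout are hypothetical.

```python
Extent = tuple[int, int]  # (start_cluster, length_in_clusters); needs Python 3.9+


def allocate_unaware(free: list[Extent], size: int) -> list[Extent]:
    """Take free runs in address order until the request is satisfied,
    as happens when the final file size is not known up front."""
    placed: list[Extent] = []
    remaining = size
    for start, length in sorted(free):
        if remaining == 0:
            break
        take = min(length, remaining)
        placed.append((start, take))
        remaining -= take
    if remaining:
        raise ValueError("not enough free space")
    return placed


def allocate_size_aware(free: list[Extent], size: int) -> list[Extent]:
    """When the size is known, prefer a single free run that holds it all."""
    for start, length in sorted(free):
        if length >= size:
            return [(start, size)]
    return allocate_unaware(free, size)  # no single run is large enough


if __name__ == "__main__":
    free_space = [(0, 3), (10, 2), (20, 5), (40, 50)]  # hypothetical fragmented volume
    print(allocate_unaware(free_space, 12))     # [(0, 3), (10, 2), (20, 5), (40, 2)]
    print(allocate_size_aware(free_space, 12))  # [(40, 12)]
```

In this model each extent corresponds to a separate I/O, so the same 12 clusters cost four I/Os when the size is unknown and one when it is known.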

IntelliWrite®, the patented write optimization technology in DymaxIO fast data performance software, solves this by giving the file system the intelligence it needs to find the best allocation. This helps ensure that Sequential I/Os occur instead of Random I/Os, for optimal I/O performance.
